APEIRON COHERENCE

The structure beneath the surface

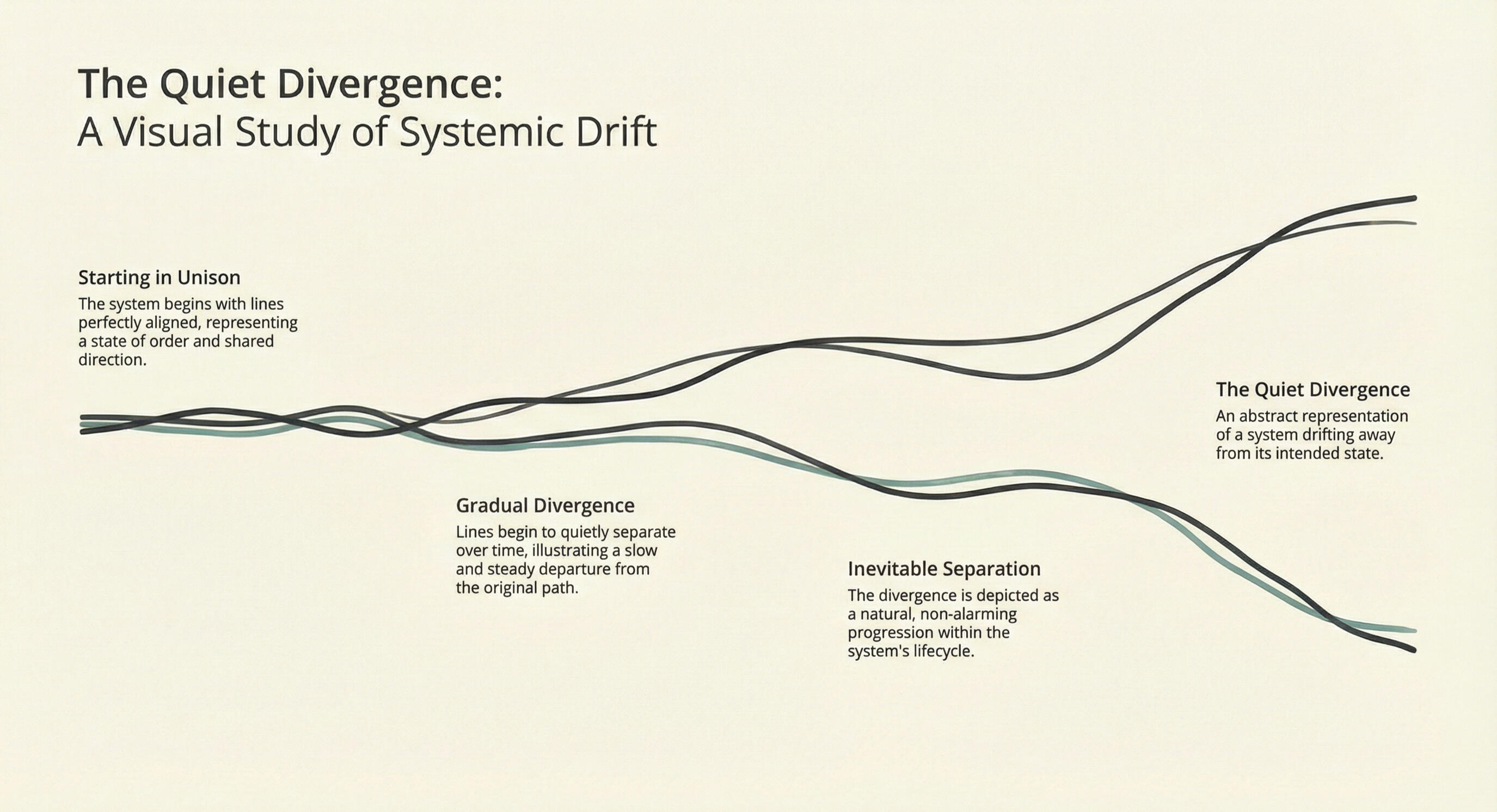

Complex systems don't fail loudly. They drift.

We make that drift visible before it becomes irreversible.

What is coherence?

Coherence is the condition where what a system says, decides, and does are structurally aligned — and remain aligned over time. Most systems lose it quietly, long before anyone notices.

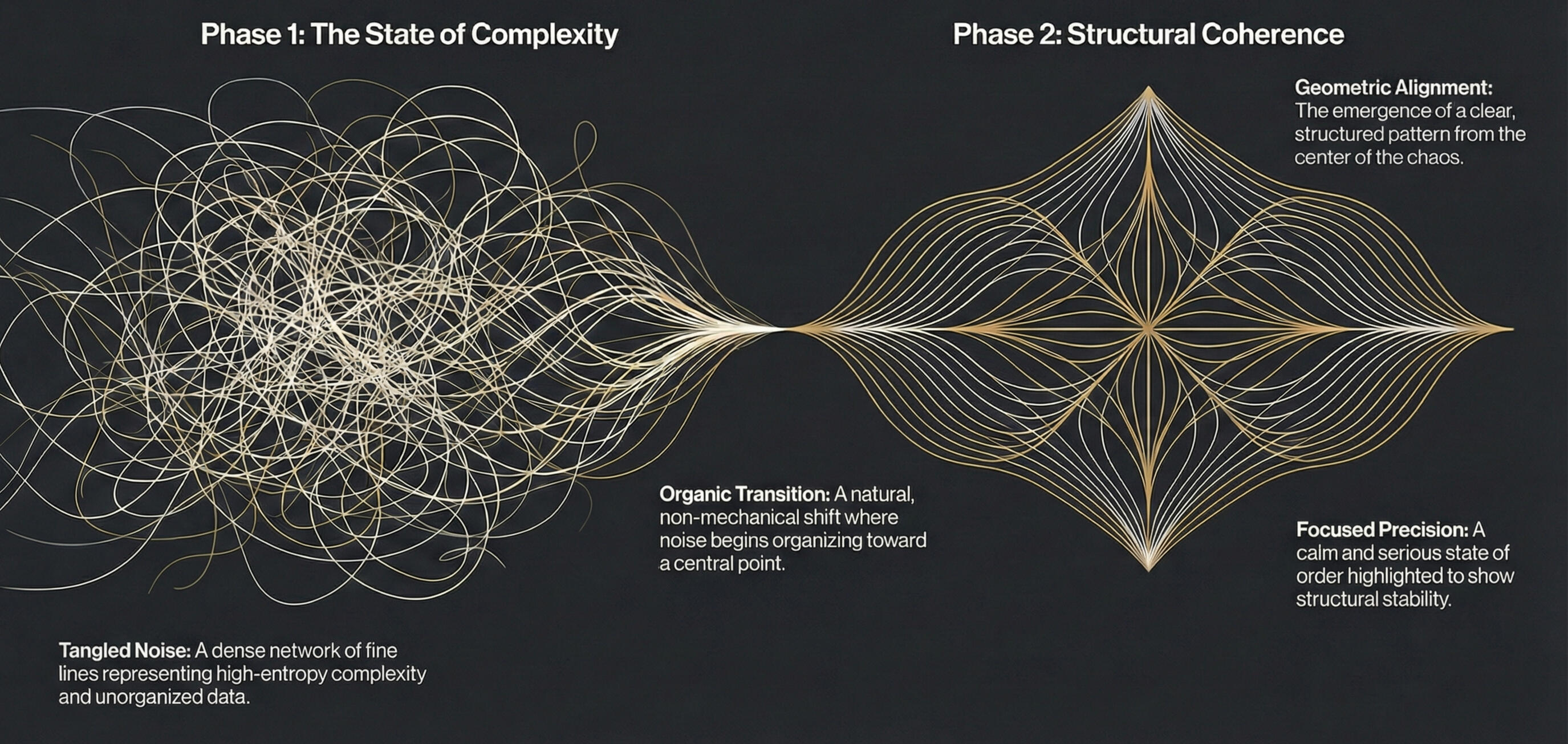

The Problem

Every complex system (a hospital, a legal matter, an organization, an AI) has an internal structure that holds its decisions together. Most of the time, that structure is invisible.

It doesn't break dramatically. It erodes through the accumulation of small, reasonable responses that quietly compound. The logic that once made everything coherent dissolves while the appearance of coherence remains.

No one sees it happening. Until it's too late.

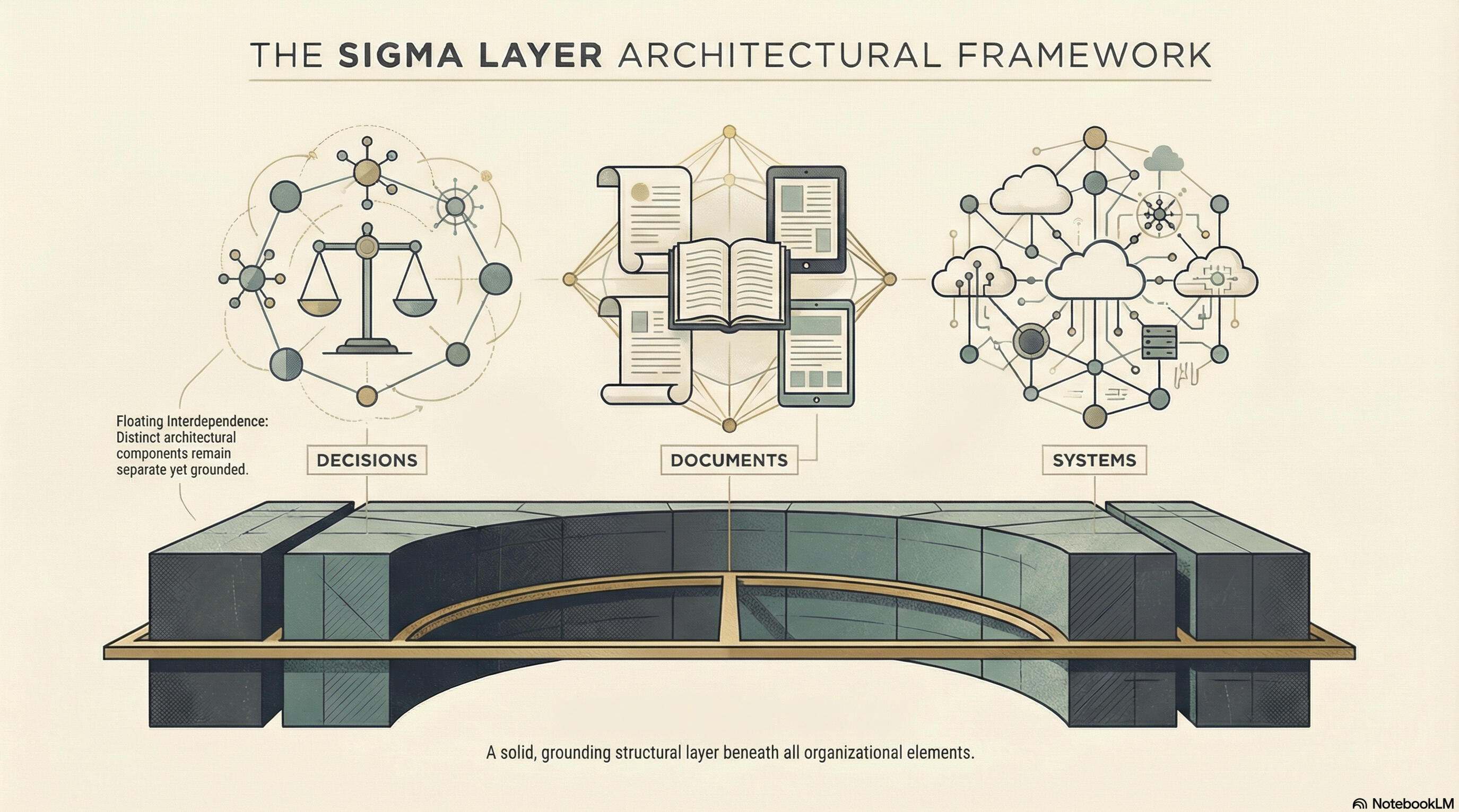

What We Do

The SIGMA framework operates as a structural layer beneath decision-making, making the invisible visible before the damage becomes irreversible.

We apply this layer to analyze, diagnose, and monitor complex systems across any domain where meaning accumulates and degrades: healthcare, law, finance, organizational governance, AI systems, and beyond.

The diagnostic form adapts to the situation: a formal coherence analysis, a structural audit, or an embedded monitoring layer. What doesn't change is the underlying capability: surfacing incoherence before it compounds into something catastrophic.

Proof of Concept

We have applied structural coherence analysis to three domains where silent drift carries the highest consequences.

SIGMA

Developed by cognitive architect Abdullah Yuri Kang, SIGMA was built around a question most systems never ask: can this still be proven wrong?

It is a formal framework for structural coherence and refutability. It detects the condition where a system, institution, document, or decision architecture has drifted from being genuinely auditable into being self-validating: still appearing rigorous on the surface, while quietly losing the capacity to be challenged or corrected.

Apeiron Coherence is its commercial application.

Try It

We are currently offering document coherence analysis to a small number of selected collaborators.

Submit any document (medical records, legal files, meeting notes, surveys, content) and receive a formal coherence report.

For sensitive documents, our anonymization tool replaces identifying details with keyed tokens before analysis. Note: Token reintegration is in development.

To submit a document, get in touch below.
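For readers curious what replacing identifying details with keyed tokens can look like in practice, here is a minimal sketch. It is not the Apeiron Coherence tool: the function names (`make_token`, `anonymize`), the token format, and the choice of HMAC-SHA256 are all assumptions made for illustration, and it assumes the identifying strings have already been listed by hand.

```python
import hashlib
import hmac


def make_token(value: str, key: bytes, label: str = "ID") -> str:
    """Derive a stable token for a value using HMAC-SHA256 (hypothetical scheme)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    # Keep only a short prefix so the token stays readable in the document.
    return f"[{label}_{digest[:8]}]"


def anonymize(text: str, identifiers: list[str], key: bytes) -> tuple[str, dict[str, str]]:
    """Replace each known identifier with its keyed token.

    Returns the redacted text and a token -> original mapping, which the
    key holder would need to keep private for any later reintegration.
    """
    mapping: dict[str, str] = {}
    # Longest identifiers first, so a full name is replaced before any
    # shorter identifier that happens to be contained in it.
    for value in sorted(set(identifiers), key=len, reverse=True):
        token = make_token(value, key)
        mapping[token] = value
        text = text.replace(value, token)
    return text, mapping


if __name__ == "__main__":
    sample = "Jane Doe saw Dr. Smith on Monday. Jane Doe returned on Friday."
    redacted, mapping = anonymize(sample, ["Jane Doe", "Dr. Smith"], key=b"example-key")
    print(redacted)
```

Because the token is derived from the value and a secret key, the same name maps to the same token everywhere in a document, so the structure of who did what stays analyzable, while a different key yields unrelated tokens.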

Contact

Ra Puriri: [email protected]
+640273470650 (WhatsApp)